A semantic layer for your codebase

Give your AI a map, not a maze.

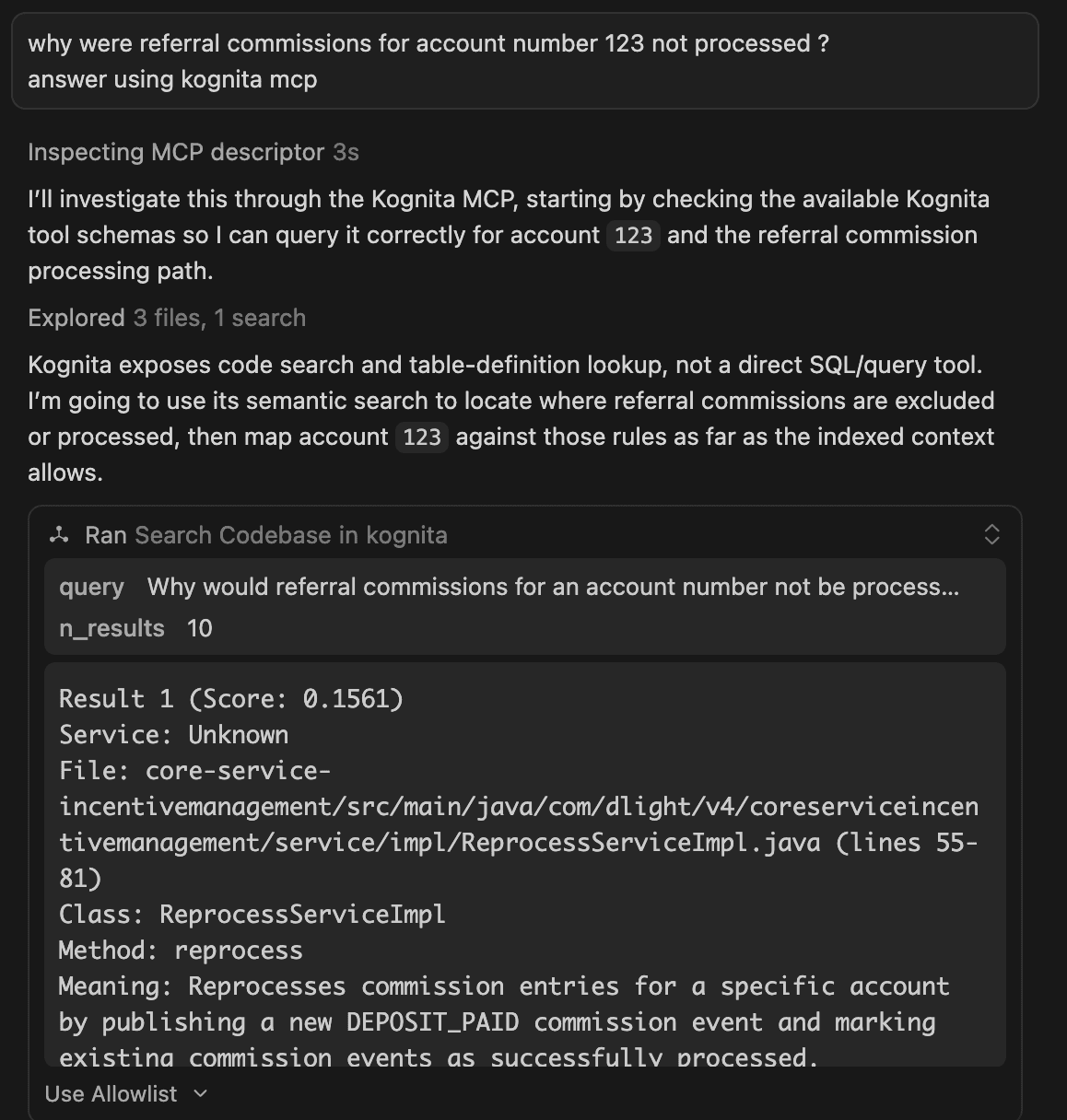

AI tools waste time and tokens re-discovering structure you already understand. Kognita gives them a queryable semantic layer and call graph to start from.

Plugs in via MCP — Claude Code, Cursor, Codex and more.

✦ Up to 50% less context-window usage per session


call graph database

Your code becomes a call graph — every method, every link, queryable by AI

Every function, import, and dependency becomes a connected node — your entire codebase in one queryable graph.

AI traces complete call flows without reading a line — it just follows the edges.
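To make "following the edges" concrete, here is a minimal sketch of a call graph as a plain adjacency map, with a breadth-first trace from one entry point. The function names and the graph itself are hypothetical illustrations, not Kognita's actual schema or query API.

```python
from collections import deque

# Hypothetical call graph: each key is a function, each value lists what it calls.
CALL_GRAPH = {
    "handle_request": ["authenticate", "load_order"],
    "authenticate": ["verify_token"],
    "load_order": ["query_db", "format_order"],
    "verify_token": [],
    "query_db": [],
    "format_order": [],
}


def trace_calls(graph, entry):
    """Return every function reachable from `entry`, in breadth-first order."""
    seen = [entry]
    queue = deque([entry])
    while queue:
        caller = queue.popleft()
        for callee in graph.get(caller, []):
            if callee not in seen:
                seen.append(callee)
                queue.append(callee)
    return seen


print(trace_calls(CALL_GRAPH, "handle_request"))
```

The trace touches only node names and edges, never file contents, which is the point of querying a graph instead of reading source.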

semantic retrieval

Right context. Right time.

Your code is chunked into logical units and its meaning is extracted. Advanced retrieval algorithms then make sure AI gets exactly what it needs, the moment it needs it.

Intelligent chunking

Code is split along real semantic boundaries — functions, classes, modules — not arbitrary line counts. Every chunk is a meaningful unit.
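As a rough illustration of splitting on semantic boundaries rather than line counts, the sketch below uses Python's standard `ast` module to emit one chunk per top-level function or class. It is an assumption about how such chunking could work for Python source, not a description of Kognita's parser; the sample source and the `chunk_source` helper are made up for the example.

```python
import ast

# Hypothetical sample source to be chunked.
SOURCE = '''
import os

def load(path):
    return open(path).read()

class Parser:
    def parse(self, text):
        return text.split()
'''


def chunk_source(source):
    """Split source into one chunk per top-level function or class."""
    tree = ast.parse(source)
    chunks = []
    for node in tree.body:
        if isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef, ast.ClassDef)):
            chunks.append({
                "name": node.name,
                "kind": type(node).__name__,
                # Exact source text of the node, recovered from its position info.
                "text": ast.get_source_segment(source, node),
            })
    return chunks


for chunk in chunk_source(SOURCE):
    print(chunk["kind"], chunk["name"])
```

Each chunk here is a whole function or class, so nothing is cut off mid-definition the way a fixed line-count split could.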

Meaning extraction

Intent is extracted, not just syntax. Each chunk is understood in the context of your whole system — what it does, not just what it says.

Precision retrieval

Advanced information retrieval algorithms surface exactly the right context at the right moment — so AI never guesses, never wanders.
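The retrieval step can be pictured with a toy ranking: score each chunk summary against the query and return the best match. The sketch below uses bag-of-words cosine similarity as a deliberately simple stand-in; the chunk names and summaries are hypothetical, and this is not the algorithm Kognita uses.

```python
import math
import re
from collections import Counter

# Hypothetical chunk summaries keyed by function name.
CHUNKS = {
    "verify_token": "check that a session token is valid and not expired",
    "query_db": "run a sql query against the orders database",
    "format_order": "render an order as json for the api response",
}


def vectorize(text):
    """Turn text into a bag-of-words term-count vector."""
    return Counter(re.findall(r"[a-z]+", text.lower()))


def cosine(a, b):
    """Cosine similarity between two term-count vectors."""
    dot = sum(a[term] * b[term] for term in a if term in b)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


def retrieve(query, chunks, k=1):
    """Return the names of the k chunks most similar to the query."""
    q = vectorize(query)
    ranked = sorted(
        chunks, key=lambda name: cosine(q, vectorize(chunks[name])), reverse=True
    )
    return ranked[:k]


print(retrieve("is the token expired", CHUNKS))
```

A production system would likely use learned embeddings and graph signals rather than word overlap, but the shape is the same: score, rank, return only the top results.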

plug in via mcp

One command. Every AI tool, connected.

Kognita speaks MCP — the open standard for connecting AI tools to external context. Add it once and every session starts with your full semantic layer already loaded.

$ claude mcp add-json kognita-mcp '{"type":"http","url":"https://kognita.is/mcp","headers":{"Authorization":"Bearer <your-token>"}}'

    {
      "mcpServers": {
        "kognita-mcp": {
          "type": "streamable-http",
          "url": "https://kognita.is/mcp",
          "headers": {
            "Authorization": "Bearer <your-token>"
          }
        }
      }
    }

    mcp_servers:
      kognita:
        type: streamable-http
        url: https://kognita.is/mcp
        headers:
          Authorization: "Bearer <your-token>"

Also works with Windsurf, Zed, Continue, and any other MCP-compatible client.
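If you would rather not paste a token into a config file by hand, a small script can generate the JSON form of the config for you. This is a minimal sketch: the `KOGNITA_TOKEN` environment variable and the `build_mcp_config` helper are assumptions made for the example, and where the resulting JSON belongs depends on your client.

```python
import json
import os


def build_mcp_config(token, url="https://kognita.is/mcp"):
    """Build the JSON-style MCP server entry shown above for a given token."""
    return {
        "mcpServers": {
            "kognita-mcp": {
                "type": "streamable-http",
                "url": url,
                "headers": {"Authorization": f"Bearer {token}"},
            }
        }
    }


# KOGNITA_TOKEN is a hypothetical variable name; falls back to the placeholder.
token = os.environ.get("KOGNITA_TOKEN", "<your-token>")
print(json.dumps(build_mcp_config(token), indent=2))
```

Reading the token from the environment keeps it out of files you might commit by accident.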

context from the first message

Every chat kickstarts with full context — not a cold start.

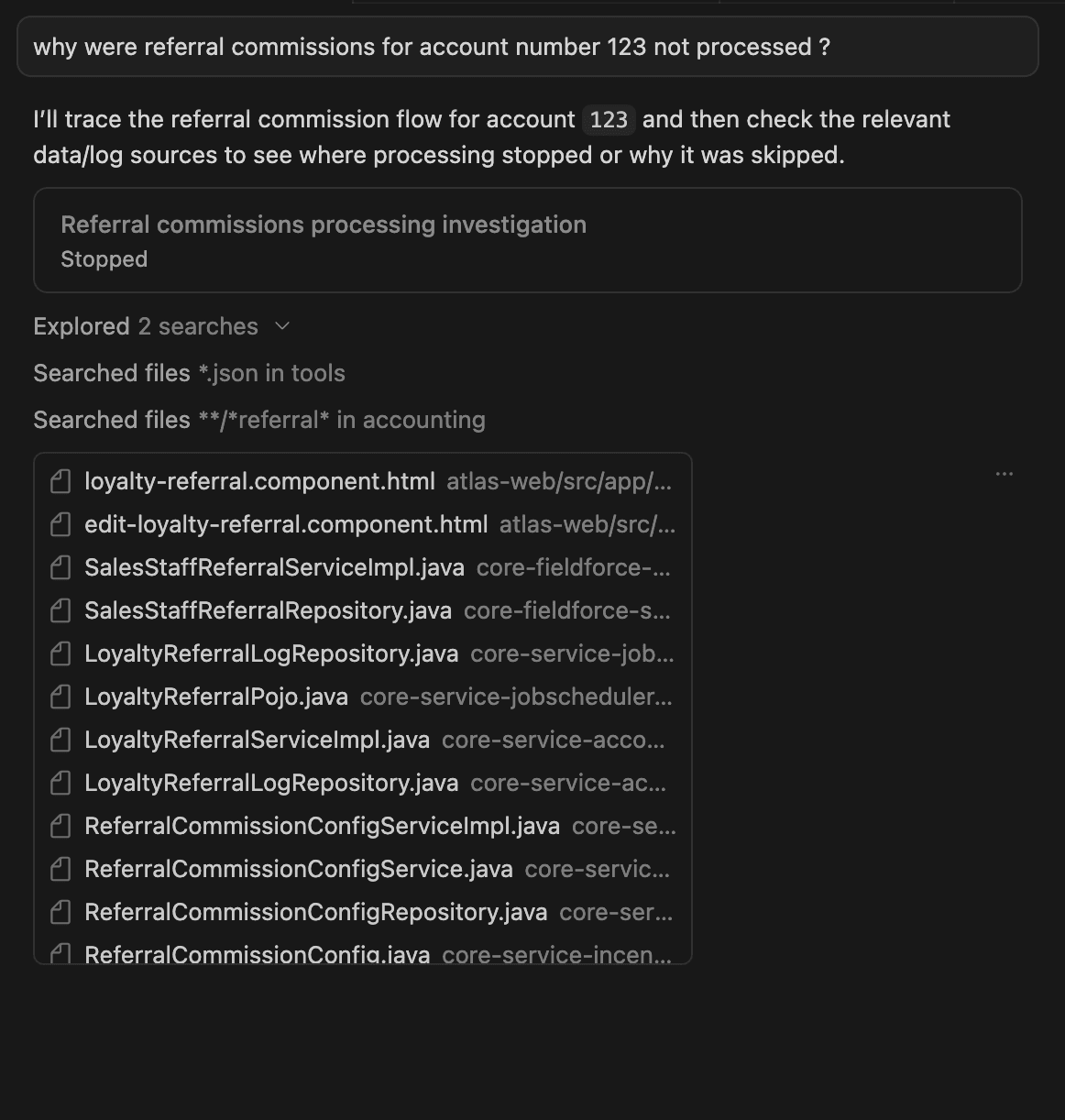

Without Kognita, AI wanders — reading files it doesn't need, missing context it can't find, burning tokens to rediscover structure you already understand.

AI reads file after file trying to orient itself — missing dependencies, guessing structure, wasting your context window before any real work begins.

With Kognita, the semantic layer is already loaded. AI knows the structure, the call graph, and the intent, and starts solving the problem from message one.

how it works

Up and running in four steps.

Connect your repo

Grant Kognita read access to your codebase via GitHub, GitLab, or any Git provider. Nothing is stored beyond what's needed for indexing.

Trigger indexing

Start a full index from your Kognita dashboard. We parse your code into a semantic graph — functions, dependencies, call chains, intent.

Get your MCP endpoint

Once indexed, you receive a personal MCP endpoint and token. Your semantic layer is live and queryable.

Connect your agents

Add the MCP server to Claude Code, Cursor, Codex, or any compatible client. Every session starts with full context from message one.